Vigilance: Behaving Safely During Routine, Novel, and Rare Events

| An experienced laborer was killed when a gasoline storage tank exploded as he was cutting the tank with a portable power saw. The worker's company installed, removed, and junked gasoline pumps and underground tanks. Although the worker had extensive experience in this line of work, this time he failed to adequately purge the tank and test for vapors. The explosion propelled the worker 10 to 15 feet from the tank into another tank. (Fatal Facts/www.osha.gov) |

Many workers have to make decisions or take actions that keep the workplace safe. Various quality checklists are used to help the worker make good decisions and to sharpen observation skills for identifying broader unsafe conditions of work. Tools and exercises such as pulse-check or safety-culture surveys, hazard identification, and barrier-removal exercises are intended to help the worker address an incident in the making. As the tragic story above shows, incidents (sometimes catastrophic) often happen when a pattern of behavior has become routine, having occurred for years without a problem.

Understanding how to increase worker attentiveness to everyday experiences, as well as to the novel or rare event, is critically important in maintaining a safe workplace.

This article begins by defining what is meant by “attending” in keeping workers safe. Creating safe habits that are maintained even when no one is looking requires high rates of reinforcement for observing accurately and acting correctly in all kinds of circumstances. Understanding hazards and their implications requires a plan of action. The vigilant worker may be overloaded with data while trying to filter out routine information. The challenges include what to do about unexpected or rare events when there is little prior history of which actions are best and, more confusing still, contradictory signals that leave it unclear what to attend to.

The reinforcing elements of daily decisions by those at the top are crucial to keeping workers safe. Establishing a corporate culture that understands what it means to be alert, one where everyone can report concerns and take action, sends a strong signal. Experience alone is not a sufficient definition of safe practice. Everyone needs to understand that knowing what is right and doing what is right are two different things. Understanding leaders’ roles in preparation and practice is central to how safe the workplace remains.

Defining Attending in Safety Situations

Attending goes beyond simply “seeing” something to analyzing its importance. The issue of seeing and yet not being attentive to danger is more than a failure to understand. Attending implies that appropriate action is taken. So safe attending requires: 1) seeing or observing in some way (using one or more of the five senses), 2) understanding the significance of what is seen, and 3) acting or not acting on the situation.

Increasing Attending

Usually, conditions requiring attending in the moment have little to do with assigning the ideal person to the job. The person reporting potential hazards, spotting a rare error, or tracking frequent data streams that require constant vigilance acts under the same scientific laws of behavior that affect all of us: the relevance of what is being observed and the payoff for attending to one kind of event over another. The job of those who place workers in potentially unsafe conditions is to teach them the relevant skills: how to be the most diligent observers possible, in which circumstances, and, most importantly, what to do immediately in unsafe conditions.

Making good choices is at the core of successful attending and involves both signal detection and decision processing. Prior learning about how to make and sustain correct decisions is important. Other relevant elements of attending include the visibility (sound, color, hue, location) and variability (random or constant) of the signals detected, as well as the meaning of events in a particular setting (e.g., significance, duration, frequency, urgency). Strength of responding is heightened by the novelty involved in doing something new and can be lessened by overexposure to repeated patterns of behavior leading to habituation (aka boredom) and, over time, the reduction and/or elimination of even long-established patterns of behavior.

Signal detection theory (SDT) assumes that actions are controlled by both a) how clearly relevant signals, and the actions they call for, can be distinguished (sensitivity, or the ability to discriminate the signal) and b) bias arising from the costs and benefits (payoffs) of making one choice over another. Outcomes are plotted on a two-by-two matrix: hits (a signal is present and reported), misses (a signal is present but not reported), false alarms (no signal is present but one is reported), and correct rejections (no signal is present and none is reported). Sensitivity to the signal, that is, recognition of its presence, together with bias, the observer’s likelihood of reporting the signal as present or absent, determines how accurately the signal is detected. In this model, what will happen is predicted from antecedent variables, the initiators needed to get behavior going. However, to maintain the needed actions, the individual’s history of positive, negative, or punishing consequences for responding after detecting the signal will control the degree to which he or she acts on the signal, whether it is a hazard, a near miss, or any other variation or concern the employee may have about the working environment. SDT research teaches that rare events are more likely to be detected if the payoff for a correct detection is high. So recognize and reinforce correct detections.
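
To make sensitivity and bias concrete, the standard SDT indices can be computed from the four cells of the outcome matrix: sensitivity d' = z(hit rate) - z(false-alarm rate) and criterion c = -[z(hit rate) + z(false-alarm rate)] / 2, where z is the inverse of the standard normal CDF. The Python sketch below assumes the equal-variance Gaussian model and adds 0.5 to each cell (a log-linear correction) so that rates never reach 0 or 1; both are common conventions, not something this article prescribes. The second function computes the payoff-optimal criterion beta = [P(noise) / P(signal)] x [(V_cr + C_fa) / (V_hit + C_miss)], which shows why rare signals push observers toward “nothing there” and why a high payoff for correct detection pulls them back. The function names and payoff values are illustrative.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def sdt_indices(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, c) for one observer's 2x2 outcome table.

    Assumes the equal-variance Gaussian SDT model. Adding 0.5 to each cell
    (a log-linear correction) keeps both rates strictly between 0 and 1,
    where the inverse normal CDF is defined.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z_hit = _STD_NORMAL.inv_cdf(hit_rate)
    z_fa = _STD_NORMAL.inv_cdf(fa_rate)
    d_prime = z_hit - z_fa       # sensitivity: how well signal is told from noise
    c = -(z_hit + z_fa) / 2      # bias: c > 0 means reluctant to report a signal
    return d_prime, c


def optimal_beta(p_signal, value_hit, cost_miss, value_cr, cost_fa):
    """Likelihood-ratio criterion that maximizes expected payoff.

    beta = [P(noise) / P(signal)] * [(V_cr + C_fa) / (V_hit + C_miss)]
    A rare signal (small p_signal) raises beta, i.e. a more conservative
    observer; a larger payoff for hits or cost for misses lowers it.
    Costs are entered as positive magnitudes.
    """
    p_noise = 1.0 - p_signal
    return (p_noise / p_signal) * (value_cr + cost_fa) / (value_hit + cost_miss)


if __name__ == "__main__":
    print(sdt_indices(hits=40, misses=10, false_alarms=10, correct_rejections=40))
    print(optimal_beta(0.01, 1, 1, 1, 1))    # rare hazard, equal payoffs
    print(optimal_beta(0.01, 20, 30, 1, 1))  # rare hazard, detection well rewarded
```

With a hazard present on only 1 percent of observations and equal payoffs, the optimal criterion is about 99, a very conservative setting; raising the value of a hit to 20 and the cost of a miss to 30 brings it down to about 3.96.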

Competing Contingencies

Research that generalizes from SDT shows that a person’s skills in accurately discerning the facts and then acting on those facts in an appropriate manner are key steps in effectively identifying safety hazards. However, workers’ actions are strongly influenced by the likely payoff or lack thereof in identifying hazards or reporting near misses and so on. The cultural and managerial practices that offer reinforcement or punishment for responding are active influencers in detecting and acting upon signals.

| Massey Energy owned and operated Upper Big Branch Mine where 29 miners were killed in April 2010. The Mine Safety and Health Administration found that the company's culture of favoring production over safety contributed to flagrant safety violations that caused the coal dust explosion. It assessed $10.8 million in fines for 369 citations and orders, the largest for any mine disaster in U.S. history. Alpha Natural Resources additionally settled Massey's potential criminal liabilities for $209 million. (Massey Energy. Wikipedia. 2012) |

In some of the most incredible stories of failing to protect the worker, self-interest plays an undeniable role in the choices made by individuals outside the danger zone. That is where leaders often reside, and attending to potential danger from outside the danger zone requires special alertness: reviewing every decision in terms of how it might affect the worker on a daily basis. Investigators documented Massey’s negligence toward its workforce in incredible detail, and Massey’s leadership offered various post-hoc justifications for failing to ensure adequate air-quality sensors and ventilation.

Decisions made in this case and in others are influenced by more than just accuracy in gathering facts. Massey leadership took actions based on what they considered to be most critical to them, what they were reinforced for doing—and in this case, it appears that what was most reinforcing was producing the most coal possible with minimal investment in workers’ health and safety. They had no outside voice reviewing their decisions with them, minimal practice in listening to and responding quickly to worker concerns, and little evidence that they trusted or wanted the worker to stop work for the sake of safety.

Skill in Identifying Threat

Practical analysis of how a person decides to make one choice over another also applies to whether, and when, individuals correctly identify safe or unsafe conditions in the environment. In a nutshell, accuracy of judgment in identifying error and reinforcement for the actions taken are keys to keeping the environment safe. Dr. Don Hantula and others offer science-based evidence that what we think of as rational and good decisions are not necessarily well thought out, are often based on old data or on protecting the status quo, and are rarely evaluated by others before being accepted, especially decisions made by leaders. Exploring novel ideas or gathering new data before deciding is rarely done in a systematic way. New information can be put in the hands of the decision maker; however, depending on how much time they believe they have to reach a decision, many set the new information aside in favor of what they learned even decades earlier, assuming the end result will benefit from this experience base. We also reinterpret new information against that frame of reference. Decision makers are often reinforced for their ability to tell others clearly and immediately what they have decided. Being fast is mistaken for being right, and all too often the failures that follow such decisions are not identified early.

Other kinds of decision errors are common even among the best leaders, and the more often we are asked to make decisions as senior leaders, the worse we actually become at making them. That is, an experienced leader’s decisions become less likely to be right for a given situation than decisions informed by more recent or novel information, decisions reached by small groups, or decisions made through an analytic and systematic approach, free of the pressure to be quick in order to seem a good decision maker. How a person scans the workplace, the variables attended to, and what is merely seen (rather than attended to) can be very limited. So if accuracy in decision making is essential, a good process for checking critical safety decisions is important, such as formal audits, team review, discussion of biases, and exploration of alternative solutions.

| A painter foreman climbed over a bridge railing to inspect work being done, slipped, and fell 150 feet to his death. (Fatal Facts/www.osha.gov) |

A different kind of decision making is often required when action must be immediate. Lone workers in high-vigilance situations must be able to sort through the noise in the environment and separate out real signals of threat. They must decide what is good for themselves and their fellow workers, and how their immediate actions will help or harm individuals in the work setting. What effect will failure produce? What are the risks to self and others? Most line workers understand that their decisions are critical to safety and are often very skillful in doing the right thing. If they are attuned, they are often excellent at taking immediate action. However, these same workers also engage in routine activity in which they may not maintain constant safety vigilance.

| A three-man crew was digging a trench for a new sewer line using a poorly maintained backhoe. In addition to other problems, the backhoe’s starter button did not work, and the safety catch on the gear shift was broken. The operator used a screwdriver to engage the starter and get the engine going. When the gear shift engaged, the vehicle lurched forward, running over and killing one of the crew members. This company had a safety and health training program but, according to post-accident analysis, the company had not adequately attended to safe work practices. (Fatal Facts/www.osha.gov) |

Infrequent contact with risky events can lead to significant misses. An event may look so much like an earlier, similar event that workers interpret the current signals as insignificant, just like something that occurred in the past and was, after all, safe. The decision then was to act. Why not now? In other words, “We’ve always done it this way and nothing bad has happened.” To help ensure that everyone has sufficient training to “see” potential dangers and to work through a decision process that requires examining observer bias, among other things, managers must help workers build skill in making small discriminations. Altered processes, equipment malfunctions, or decisions that can affect performance can be built into simulations to help the worker notice subtle changes. It is important to track, through briefings, not only the common and usual practices but also the novel or rare changes in work that can indicate a potential safety hazard or a behavioral drift that can lead to accidents or errors.

Companies have to work hard to make workers comfortable enough to ask for equipment to be shut down when, in their judgment, certain tolerance limits are near their end point. An environment in which managers truly want these decisions in the hands of those who do the work requires a culture that respects the worker’s judgment and actively reinforces shutting down a line or a piece of equipment based on the worker’s assessment. This requires that workers face no direct or indirect punishment for the costs associated with such actions. Such behavior is very difficult to sustain if intimidation occurs. However, in many work settings, we find that workers who are active partners with their leaders in creating safe cultures expect such conditions and speak up if inconsistent messages are sent.

Setting up such a culture requires an open process of problem-solving in which employees are invited to bring their ideas to the table. The title of manager gives that individual no special advantage in making safe calls. These kinds of cultural imperatives require recognizing, acting on, and rewarding employees’ observing and reporting. Developing skill in process, workflow, and clear communication patterns in the workplace requires an open invitation to explore what works and what does not in regular reviews. In safety, good judgment is needed but, as importantly, an environment must be created where employees speak up readily. Such environments create the conditions where preventive attending occurs much more frequently.

Infrequent Decisions

Often employees must make decisions in situations that occur infrequently. Both the lack of repetition and the time between opportunities may affect decisions. As we look to improve these skills, we must understand some contributing factors. For example:

- Do they assess situations concerning safety correctly?

- Do they take the correct action in the face of distractions?

- Are there other activities in the work setting that might contribute to “behavior drift?”

- Are the right behaviors less likely to occur because of the absence of reinforcement or the presence of punishment, particularly when raising questions?

| Two employees were attempting to adjust the brakes on a backhoe when one of the workers told the backhoe operator to raise the wheels off the ground using the front bucket and the outriggers, and then to put the backhoe in gear at idle and step on the brakes. The worker crawled under the machine to adjust the brakes. Within minutes, he was found dead under the backhoe with the hood of his rain jacket wrapped around the drive shaft. His neck had been broken when the jacket wrapped around the backhoe drive shaft. (Fatal Facts/www.osha.gov) |

The infrequently observed event that requires a decision is a significant issue in establishing safe practices. While signal detection can help clarify the information needed and the outcome achieved, it does not teach employees or leadership about the process of decision making and what can help them make better decisions about potentially unsafe, albeit infrequent, or novel events. A better understanding of decision making can help everyone attend to the right cues and the surrounding conditions, and so remain vigilant in the decisions they make. Again, exercises (using real data from real events whenever possible) with rare errors inserted can help workers recognize the relevance of attending while learning to respond to such infrequent “signals” more quickly. Remember, ambiguous or novel events can be great teaching tools precisely because what to do, how to act, and with what degree of urgency may be unclear.
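
One way to build such exercises is to take a stream of real records and alter a small, randomly chosen subset, keeping a private answer key for scoring. The Python sketch below assumes each record can be passed to a caller-supplied `make_error` function; `build_drill`, `make_error`, and `score` are hypothetical names for illustration, not part of any existing tool. Scoring in terms of hits, misses, and false alarms ties the exercise back to the signal detection matrix.

```python
import random


def build_drill(real_records, n_inserted, make_error, seed=None):
    """Alter a few randomly chosen records in a stream of real ones.

    Returns (drill, answer_key): the drill keeps the original order, and
    answer_key is the set of positions that now hold an inserted error.
    """
    if not 0 <= n_inserted <= len(real_records):
        raise ValueError("n_inserted must be between 0 and the number of records")
    rng = random.Random(seed)
    drill = list(real_records)
    targets = rng.sample(range(len(drill)), n_inserted)  # distinct positions
    for i in targets:
        drill[i] = make_error(drill[i])
    return drill, set(targets)


def score(flagged_positions, answer_key):
    """Classify a trainee's flags as hits, misses, and false alarms."""
    flagged = set(flagged_positions)
    return {
        "hits": len(flagged & answer_key),
        "misses": len(answer_key - flagged),
        "false_alarms": len(flagged - answer_key),
    }


if __name__ == "__main__":
    readings = [100.0] * 50  # stand-in for real pressure readings
    drill, key = build_drill(readings, 3, lambda r: r * 1.5, seed=7)
    print(score({0, 1, 2}, key))
```

Passing a fixed seed lets the same drill be replayed for every trainee, so their scores are comparable.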

Schedules of Reinforcement:

A Method for Increasing Observer Accuracy Across Conditions

One way to make learning more effective is to understand how schedules of reinforcement can help. If you have a new learner who is just beginning to understand what you are asking and how to do it, you need to reinforce frequently, shape the response toward the desired end goal, and recognize each correct response until it occurs at a high and steady rate of accuracy. Reinforcing every correct response is called continuous reinforcement; reinforcing successive approximations toward the end goal is called shaping. Much of what is described here concerns variable-ratio schedule training, that is, how often and for which actions reinforcement is applied once the skill is established. Because reinforcement follows an unpredictable number of responses, such a schedule produces persistent responding and has a dramatic impact on the strength and rate of the response. The goal of reinforcement is always to strengthen the behavior and increase the likelihood that it will occur again in the future.
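
The schedules named above differ only in the rule that decides whether a given correct response is reinforced. The Python sketch below states each rule explicitly; it is an illustration, not a training system, and the class names are hypothetical. One assumption to flag: the variable-ratio schedule is approximated here as a random-ratio schedule, in which each response is reinforced with probability 1/n, so reinforcement arrives after an unpredictable number of responses that averages n.

```python
import random


class Continuous:
    """CRF: every correct response is reinforced (for new learners)."""

    def reinforce(self) -> bool:
        return True


class FixedRatio:
    """FR-n: every n-th correct response is reinforced."""

    def __init__(self, n: int):
        self.n = n
        self.count = 0

    def reinforce(self) -> bool:
        self.count += 1
        if self.count == self.n:
            self.count = 0
            return True
        return False


class VariableRatio:
    """VR-n, approximated as random-ratio: each correct response is
    reinforced with probability 1/n, so the number of responses between
    reinforcers is unpredictable but averages n."""

    def __init__(self, n: float, seed=None):
        self.p = 1.0 / n
        self.rng = random.Random(seed)

    def reinforce(self) -> bool:
        return self.rng.random() < self.p


if __name__ == "__main__":
    for schedule in (Continuous(), FixedRatio(5), VariableRatio(5, seed=3)):
        pattern = "".join("R" if schedule.reinforce() else "." for _ in range(20))
        print(type(schedule).__name__, pattern)
```

Under FixedRatio the learner can predict exactly when the payoff comes; under VariableRatio the learner cannot, which is the property the article relies on for persistent attending.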

A problem seen by behavior safety and quality experts is the lack of a well-thought-out system for increasing workers’ skill in accurately attending to relevant cues. An example of a ratio schedule is described below in terms of quality inspections, in which occasional clues appear as inspectors complete an audit puzzle. Examples of teaching vigilance on a ratio schedule in airport security screening are provided as well. These kinds of schedule-linked training events promote better transfer and retention of skills. Well-defined schedules of reinforcement, used systematically, can increase the worker’s discrimination between relevant and irrelevant data. Learning more about this important area can help the quality or safety engineer design drills and practice that lead to highly fluent observation and address many issues related to discriminating what is important from what is less so. Schedules increase the accuracy of responding based on the frequency, timing, and duration of the behavior needed (in this case, attending) and can help create habits over time. Schedules of reinforcement are vital tools in refining observer responding. Planning to increase reinforcement for attending behaviors using schedules of reinforcement requires more than this short mention but is well worth exploring.

Ways to Increase Attending

Vigilance is just beginning to be researched in practical ways that translate easily to everyday workplace issues, but some of the best solutions have been developed by supervisors and others coming up with ideas in the field. One supervisor, for example, aware of the possibility of serious safety incidents on his site, decided that he wanted to increase vigilance during quality site inspections and audits. He put clues (in the form of colored Post-it® notes) on parts of the equipment being inspected. The clues were needed to solve various puzzles in key areas (wiring, throttles, under the gas tank, etc.). When a Post-it was found, the inspector knew he was on the right path. When an inspection was completed, the worker was rewarded once the Post-its were brought back, showing that all marked areas had been addressed or inspected, or, in some cases, once all the colors needed to address a certain concern had been collected.

Once the desired search pattern was established through frequent reinforcement (papers attached to almost every element of the inspection, placed so that finding them required attending to the process), the schedule of reinforcement was thinned out: papers no longer showed up on every piece of the audit each time an inspection was conducted, as they had under continuous reinforcement. Importantly, however, the inspectors did not know when papers would be present and when not. So they did indeed conduct more thorough inspections, still bringing back the papers that this very smart supervisor placed “on occasion.” This increased both their reported and their actual vigilance (a variable-ratio schedule of reinforcement).
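
The supervisor’s procedure amounts to a planting plan: seed every inspection at first, then seed a shrinking, unpredictable fraction of them. The Python sketch below generates such a plan; `clue_plan` and its phase format of (number of inspections, probability of planting) pairs are hypothetical, and the probabilities in the example are illustrative rather than taken from the supervisor’s practice.

```python
import random


def clue_plan(n_inspections, phases, seed=None):
    """Return one boolean per inspection: True means plant clues on it.

    phases is a list of (length, probability) pairs applied in order. The
    first phase would normally use probability 1.0 (continuous), and later
    phases use smaller probabilities to thin the schedule. The last phase's
    probability continues for any inspections beyond the listed phases.
    """
    if not phases:
        raise ValueError("at least one phase is required")
    rng = random.Random(seed)
    plan = []
    for length, p in phases:
        for _ in range(length):
            if len(plan) == n_inspections:
                return plan
            plan.append(rng.random() < p)  # random() < 1.0 is always True
    last_p = phases[-1][1]
    while len(plan) < n_inspections:
        plan.append(rng.random() < last_p)
    return plan


if __name__ == "__main__":
    plan = clue_plan(60, [(10, 1.0), (20, 0.5), (30, 0.25)], seed=1)
    print("".join("C" if seeded else "." for seeded in plan))
```

The example seeds the first 10 inspections, about half of the next 20, and about a quarter after that. Keeping the plan with the supervisor, not the inspectors, is what preserves the unpredictability.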

Homeland security agents in airports have used the intended-error method: placing a foreign or forbidden object in a bag and seeing whether that item is identified. Agents are told this is being done to heighten awareness, but they do not know when it might occur or with what frequency or thoroughness (a variable-ratio schedule). These experiments have been infrequent, but they address observer vigilance and show how such “inserted” stimuli can help heighten attending. The practice is not new in industry; thus far there is little evidence of increased skills as a result of such tests, and how to reduce failures to detect the occasional error is just beginning to be explored systematically.
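
Whether such tests improve skill is partly a measurement question: with only a handful of inserted items, a detection rate is very uncertain. The Python sketch below computes the Wilson score interval, a standard confidence interval for a proportion, for the share of inserted items that were detected; `detection_rate_ci` is a hypothetical helper name, and the counts in the example are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist


def detection_rate_ci(detected, inserted, confidence=0.95):
    """Wilson score interval for the share of inserted test items detected."""
    if inserted <= 0 or not 0 <= detected <= inserted:
        raise ValueError("need 0 <= detected <= inserted and inserted > 0")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    n = inserted
    p = detected / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


if __name__ == "__main__":
    before = detection_rate_ci(12, 20)  # hypothetical counts
    after = detection_rate_ci(15, 20)
    print(before, after, "overlap" if after[0] < before[1] else "no overlap")
```

If agents detected 12 of 20 inserted items before a training program and 15 of 20 after, the 95 percent intervals (roughly 0.39 to 0.78 and 0.53 to 0.89) overlap heavily, so tests this infrequent could not by themselves demonstrate improved skill.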

The Rare Event

Sometimes workers stop looking for something, even something highly significant, if that something almost never happens. Sustaining attention across hours of watching is a challenge faced by food safety inspectors, air traffic controllers, railway workers, military guards of high-risk perimeters, health care workers, and employees in many other settings. Heart rate monitors, sleep monitors, computer aids, and other electronic devices designed to ensure that workers are “able to attend” are available. Scheduled bells signaling various actions, medical checklists, and levers pressed at key intervals to signal alertness can show whether the worker is awake, but they cannot ensure that the worker is actively attending (observing, sorting the information, and acting).

Last year, in less than one month’s time, nine air-traffic controllers were investigated for suspicion of sleeping on the job or watching a movie while monitoring traffic. The Federal Aviation Administration (FAA) fired two of the controllers. Bill Voss, a former controller who now heads the non-profit Flight Safety Foundation, defends the system as having an unprecedented level of safety, but he blames such incidents on FAA policies that force controllers to work sleep-depriving schedules. He stated that the latest incidents are only symptoms of a decades-long problem. Air-traffic controllers, if tired, are at significant risk of insufficient reaction time in responding to danger signals, as are others such as tugboat captains and their crews:

| “Working offshore is dangerous; seamen face weather hazards, slippery decks, cold temperatures and other extreme conditions. But, working on a tugboat is especially risky. These small, but hardworking vessels are able to pull much larger ships and barges. While they are powerful, the small size of tugboats puts them at risk. If a tug needs to stop suddenly, the ship or barge being towed may continue to travel until it collides with the tug. In addition, tugboats often need to travel a considerable distance in order to do their job, so tugboats are designed to carry a lot of fuel. A lot of fuel means there is a high risk of a tugboat catching fire after a collision.” (The Young Firm: Maritime Attorneys) |

Individuals on shift work who have long hours to put in before breaks often go beyond what is safe. Pilots, flight crews, drivers of various kinds, and others often work past the point at which the body needs rest to refresh and renew its ability to function. The average office worker is subject to these safety issues as well. Office bosses can require employees to work too late, or hard workers can stay beyond their tolerance and then drive home in weather or lighting conditions where fatigue adds to the likelihood of errors or accidents. No one is safe from the drain that comes from lack of sleep.

On an oil rig 200 miles out in the Gulf, a crew was on what was called 36-hour duty. They did take brief naps, but the rest cycle was inadequate for making pipe placement decisions and keeping the crew safe. Nevertheless, working long hours was considered macho, and a few peers labeled those who needed sleep weak sisters, not suited to the requirements of the job. Rest is an imperative of attending, but culturally, Americans sometimes label the need for it as a sign of female weakness, particularly when observed in males. Most, but not all, can laugh it off, and for some, avoiding that label is worth risking it all. Such bullying workplace cultures and tactics need to be addressed and changed. Just as bullying in our public schools hurts children in unforeseen ways, bullying at work is a devastating barrier to acting safely for many. This issue is rarely addressed as directly as needed.

In driving for productivity, the workplace and its leadership can demand endurance beyond sane human capacity. When interviewed, leaders in such situations almost always say they understand the need to rest and do not require such effort. In fact, however, the unintended consequences of how pay and hours are distributed, how goals are set, and whether people are allowed to make a judgment call about their own alertness all build the belief that keeping the job requires such endurance. It is perhaps a misunderstanding, but only the leaders themselves can change it, through how they recognize and reward careful use of the most important factor in success at work: employees behaving as best they can in high-risk or potentially high-risk areas.

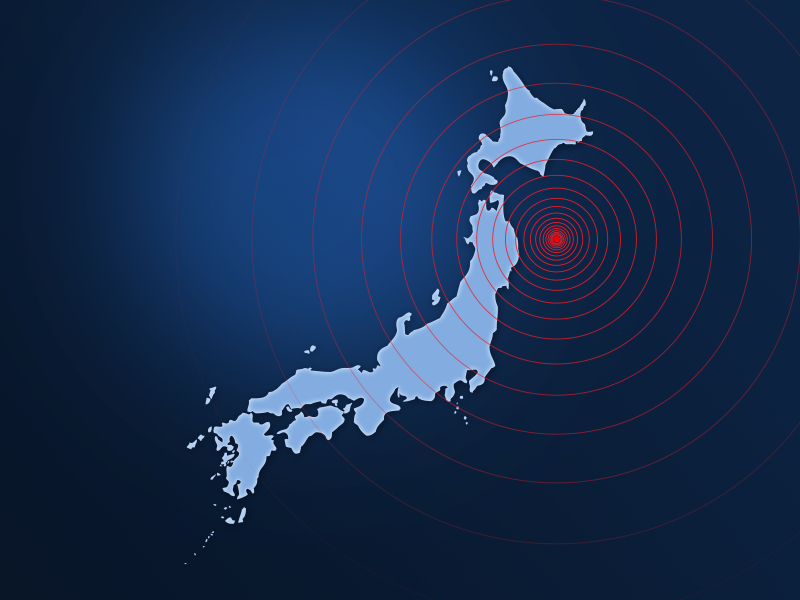

Preparation

The 2011 meltdown at Japan’s Fukushima nuclear plant illustrates the need for vigilance but, even more, the need for what-if scenarios and preparation for what might happen at nuclear plants operating at the sea’s edge. It is easy to make post-hoc judgments, but in deep mining and nuclear plant construction, preparation in anticipation of unforeseen crises is imperative. What-if scenarios, even doomsday scenarios, about highly improbable events help: “What could happen if a tsunami came ashore 40.5 meters (about 133 feet) high during a magnitude 9 earthquake, hitting vital nuclear facilities built close to the shore?” If such simultaneous events were considered at all, their odds were most likely calculated to be almost unimaginably small. However, such doomsday scenarios may be the only way to be truly prepared for the unthinkable. In the aftermath of the tragedy, Yoshihiko Noda, Japan’s prime minister, admitted that the country was caught off guard. He acknowledged that the workers were unprepared for such an event and conceded that emergency standards at the country’s power plants had been lax. He listed on-site problems such as incompatible electrical plugs, collapsed roads, and a lack of action plans.

Closer to our shores, an assessment of the Twin Towers evacuation indicates that not all office workers were required to participate in exit drills. Communication with each floor, and how and in what order to evacuate individuals with disabilities, were not sufficiently considered, including the possible need to use the stairs only. Which stair exits connected to the floors below, and how to reach them by different routes, were not practiced by all workers to the point of habit strength. Preparing for the unthinkable is often just that: unthinkable. Very few of us had imagined planes hitting the towers at high speed. However, for certain environmental or structural issues, vigilant workers will never be enough. Those who plan and prepare must remain vigilant, even after buildings are constructed, tracks are laid, or mines are dug.

Conclusion

Building sustainable safe habits that involve careful attending (seeing, understanding, and acting) poses significant behavioral as well as physiological challenges, challenges that we, as behavioral specialists, are very interested in addressing. The science of behavior has much to offer in creating a vigilant workforce, but to achieve that, leaders must understand the science so that practical and cost-effective solutions can be implemented. Vigilance will become even more important in the future as work processes become increasingly computer controlled and highly reliable, leaving workers to watch for failures that rarely occur. When the science of behavior and its relationship to safe practice are well known, reactive management will be replaced by action based on analysis of the contributions of the physical environment, the management environment, and the behavioral conditions required to create a workplace where rare incidents are limited to acts of nature.

Published April 12, 2012

References

Buck, L. (1968). Experiments on railway vigilance devices. Ergonomics, 11(6), 557-564.

Carter, N., Hansson, L., Holmberg, B., & Melin, L. (1980). Shoplifting reduction through the use of specific signs. Journal of Organizational Behavior Management, 2(2), 73-84.

Crowell, C. R., & Anderson, D. C. (2005). On reinventing OBM: Comments regarding Geller’s proposals for change. Journal of Organizational Behavior Management, 24(1-2), 27-53.

Hogan, L. C., Bell, M., & Olson, R. (2009). A preliminary investigation of the reinforcement function of signal detections in simulated baggage screening: Further support for the vigilance reinforcement hypothesis. Journal of Organizational Behavior Management, 29, 6-18.

Hurrell, J. J., Jr., & Colligan, M. J. (1987). Machine pacing and shiftwork. Journal of Organizational Behavior Management, 8(2), 159-176.

Murphy, L. R. (1987). A review of organizational stress management research. Journal of Organizational Behavior Management, 8(2), 215-228.

Mason, M. A., & Redmon, W. K. (1992). Effects of immediate versus delayed feedback on error detection accuracy in a quality control simulation. Journal of Organizational Behavior Management, 13(1), 49-83.

McCarley, J. S., Kramer, A. F., Wickens, C. D., Vidoni, E. D., & Boot, W. R. (2004). Visual skills in airport-security screening. Psychological Science, 15(5), 302-306.

Methot, L. L., & Huitema, B. (1998). Effects of signal probability on individual differences in vigilance. Human Factors, 40(1), 102-110.

O’Connor, R. T., Lerman, D. C., Fritz, J., & Hodde, H. (2010). Effects of number and location of bins on plastic recycling at a university. Journal of Applied Behavior Analysis, 43(4), 711-715.

Snyder, G. (2009). Decision making in uncertain times: An interview with Don Hantula. PM eZine. www.pmezine.com